Improving VR Video Quality with Alternative Projections

Matthew Wellings 10-Feb-2016

In my previous blog posts I covered how to make a (non-real-time) VR video recording of an OpenGL Cardboard game that can be uploaded to YouTube. I also covered how to play this format inside your OpenGL apps and games. Now that we have control over the full rendering/playback pipeline, it is possible to start experimenting with the way we store these files and see if we can make any improvements to the mapping.

The current projection that YouTube accepts is a modified equirectangular projection. This projection uses a linear mapping of the spherical co-ordinates of visible objects (their latitude and longitude on a virtual sphere) to points on the video.
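As a rough illustration, a plain (mono) equirectangular mapping can be sketched in Java as below. The class and method names are hypothetical, the axis conventions (u growing eastward, v growing northward) are assumptions, and the sketch ignores whatever modifications YouTube's variant of the format applies.

```java
// Minimal sketch of a plain equirectangular mapping (not YouTube's modified variant).
// Assumed conventions: u grows eastward, v grows northward, both in [0, 1].
final class EquirectSketch {

    /** Longitude in [-180, 180] degrees maps linearly to u in [0, 1]. */
    static double u(double lonDeg) {
        return (lonDeg + 180.0) / 360.0;
    }

    /** Latitude in [-90, 90] degrees maps linearly to v in [0, 1]. */
    static double v(double latDeg) {
        return (latDeg + 90.0) / 180.0;
    }

    public static void main(String[] args) {
        // The point straight ahead lands in the centre of the frame.
        System.out.println(u(0.0) + ", " + v(0.0));
        // A point 45 degrees above the horizon lands three quarters of the way up.
        System.out.println(u(0.0) + ", " + v(45.0));
    }
}
```

Because both axes are linear in the angles, every degree of latitude receives the same number of rows, whether it lies at the horizon or next to a pole.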

This projection is very simple to play back and fairly straightforward to produce. One useful property is that each vertical column of pixels in the video corresponds both to a meridian on the sphere and to a single camera position when rendering. This allows us to render the scene as simple strips, rotating and translating the camera for each strip. Many other projections, such as a cube map, would reduce distortion in the polar areas (above and below you) but would make the relationship between camera position and the point on the video more complex.

Rather than changing to a completely different projection, it may be better to try to improve upon this one. A notable problem with the equirectangular projection is that, relative to the area they cover on the sphere, it assigns few pixels to the equatorial region (the area in front of and around the viewer) and many pixels to the polar areas. This seems wasteful, as it is the equatorial area that is likely to contain what the user wants to watch. Many VR videos currently on YouTube blur out the polar areas completely. This may be because the Jump camera cannot film those areas.

In order to assign more pixels to the equatorial areas of the image, we could map the sine of the latitude, rather than the latitude itself, to the vertical position in the video. This will sacrifice resolution near the poles but improve it near the equator. This type of projection is called a cylindrical equal-area projection. Versions of it are used to make political maps that show the countries of the world in correct proportion to each other. (Traditional projections make Africa and South America look smaller in proportion to, say, Europe and North America.) Using a cylindrical equal-area projection will not, however, give the whole image the same resolution. As we look towards the poles, the image exchanges vertical resolution for horizontal resolution. This lack of vertical resolution may be noticeable, although you might choose not to render this part.
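To put numbers on the trade-off, the following Java sketch compares the share of the frame's rows that each projection gives to a band around the horizon. The names are hypothetical and the convention (v = 0 at the south pole, v = 1 at the north pole) is an assumption made for the example.

```java
// Sketch only: compares how many rows each projection gives to a latitude band.
// Assumed convention: v = 0 at the south pole, v = 1 at the north pole.
final class ProjectionRowsSketch {

    /** Equirectangular: v is linear in the latitude itself. */
    static double equirectV(double latRad) {
        return latRad / Math.PI + 0.5;
    }

    /** Cylindrical equal-area: v is linear in the sine of the latitude. */
    static double equalAreaV(double latRad) {
        return (Math.sin(latRad) + 1.0) / 2.0;
    }

    /** Fraction of the frame's rows lying between -bandDeg and +bandDeg. */
    static double bandFraction(boolean equalArea, double bandDeg) {
        double lat = Math.toRadians(bandDeg);
        return equalArea
                ? equalAreaV(lat) - equalAreaV(-lat)
                : equirectV(lat) - equirectV(-lat);
    }

    public static void main(String[] args) {
        for (double band : new double[] {15.0, 30.0, 60.0}) {
            System.out.printf("within %.0f deg: equirect %.3f, equal-area %.3f%n",
                    band, bandFraction(false, band), bandFraction(true, band));
        }
    }
}
```

For the band within 30 degrees of the horizon, the equirectangular mapping spends one third of the rows and the equal-area mapping spends one half. The gain is paid for by the caps above 60 degrees, which shrink from a sixth of the rows each to roughly 7%.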

These images show how equally spaced parallels and meridians are projected:

Modifying the renderer

In order to produce video in this format using the method I described in Creating VR Video Trailers for Cardboard games, we simply modify the strip shader to become:

    precision mediump float;

    uniform sampler2D s_texture;
    uniform float u_Trans;
    uniform int u_reverse;   // 0 when looking up, 1 when looking down
    varying vec2 v_TexCoord;

    #define M_PI 3.1415926535897932384626433832795

    void main() {
        // Equirectangular mapping (replaced by the lines below):
        //float phy = v_TexCoord.y * M_PI / 2.0 - M_PI / 4.0;
        float y = v_TexCoord.y;
        if (u_reverse == 1) y = 1.0 - y;
        // Equal-area mapping: take the arcsine of the vertical coordinate.
        float phy = asin(y) - M_PI / 4.0;
        float perspective_y = (tan(phy) * 0.5 + 0.5);
        if (u_reverse == 1) perspective_y = 1.0 - perspective_y;
        gl_FragColor = texture2D(s_texture, vec2(v_TexCoord.x, perspective_y));
    }

If you look at the shader for the equirectangular projection, you will notice that this one requires an additional uniform (u_reverse). This is because this projection, unlike the equirectangular projection, is not (within each hemisphere) symmetrical about the 45th parallels. You can initialise this uniform by calling the following before glDrawArrays() in the function generateVideoFrame():

    int stripReverseParam = GLES20.glGetUniformLocation(stripProgram, "u_reverse");
    GLES20.glUniform1i(stripReverseParam, upDown);

The rendering function sets upDown to 0 when looking up and 1 when looking down.
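To see the asymmetry that makes u_reverse necessary, the shader arithmetic can be ported to plain Java and evaluated on the CPU. This is only a sketch for inspecting values: the class and method names are hypothetical, and double precision will not match the shader's mediump floats exactly.

```java
// CPU-side port of the strip shader's arithmetic, for inspection only.
final class StripMappingCheck {

    /** Equal-area shader: output row texY in [0, 1] -> row sampled from the strip. */
    static double equalAreaRow(double texY, boolean reverse) {
        double y = reverse ? 1.0 - texY : texY;
        double phy = Math.asin(y) - Math.PI / 4.0;
        double row = Math.tan(phy) * 0.5 + 0.5;
        return reverse ? 1.0 - row : row;
    }

    /** The commented-out equirectangular line, for comparison. */
    static double equirectRow(double texY) {
        double phy = texY * Math.PI / 2.0 - Math.PI / 4.0;
        return Math.tan(phy) * 0.5 + 0.5;
    }

    public static void main(String[] args) {
        // Step through the output rows in quarters and print the sampled row.
        for (double texY = 0.0; texY <= 1.0; texY += 0.25) {
            System.out.printf("texY %.2f: equirect %.4f, up %.4f, down %.4f%n",
                    texY, equirectRow(texY),
                    equalAreaRow(texY, false), equalAreaRow(texY, true));
        }
    }
}
```

At the middle output row the equirectangular version samples the middle of the strip (0.5), whereas the equal-area version samples about 0.366 when looking up and about 0.634 when looking down, so the shader has to be told which hemisphere it is drawing.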

Full code for generating VRVideos using this projection is on GitHub.

Modifying the player

In order to play these videos correctly, a player based on the one described in my post Playing VR Videos in Cardboard Apps would need a simple modification:

    videoTextureCoords[texIndex + 1] = (float) parallel / (float) (numParallels); //Equirectangular

Must become:

    videoTextureCoords[texIndex + 1] = (-(float) Math.cos(angleStep * (double) parallel) + 1f) / 2f;

Visual Results

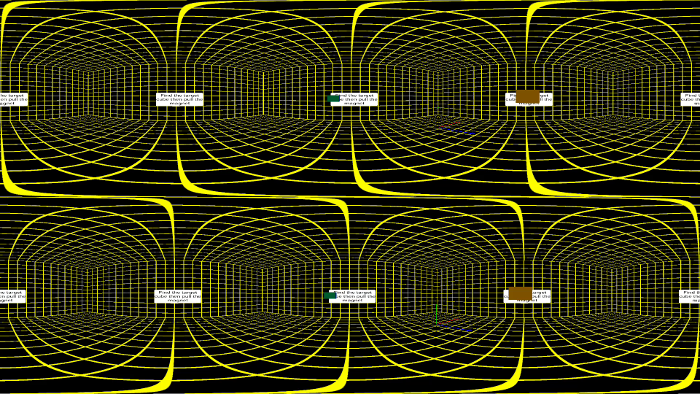

You can see the difference in the projections in these video stills:

1080s Equirectangular:

1080s Cylindrical Equal-area Projection:

You can clearly see how more of the image is used to store the area with the text in the cylindrical equal-area projection; the image is less squashed in that area. You can of course get this increase in vertical resolution by increasing the vertical size of the video (aspect ratio is irrelevant with this type of video). Increasing the size of the video will, however, consume more bandwidth, processing power and battery power. Changing the projection does not affect any of these but instead sacrifices resolution in the polar areas, which can be seen at the top and bottom of the above images.

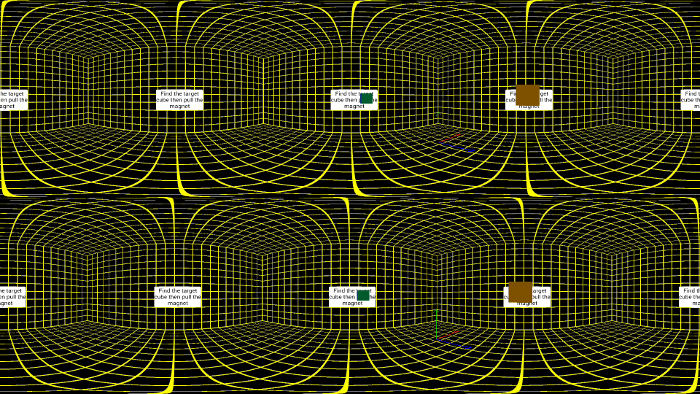

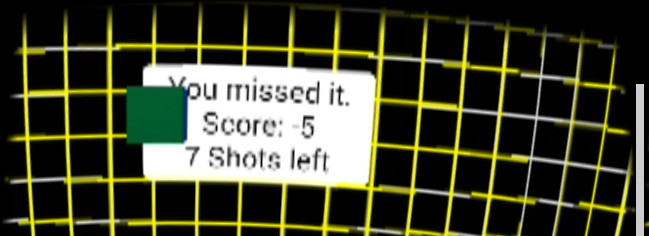

The visual improvement can be seen in these screenshots.

Screenshot of 1080s Equirectangular playback:

Screenshot of 1080s Cylindrical Equal-area Projection playback:

Possible Further Improvement

If you do not plan to render the polar areas, you may wish to scale this projection vertically to remove the top and bottom sections. This will give you an even higher resolution in the equatorial region and, provided that you scale the range rather than the domain, avoid an area of low vertical resolution near the polar circles. It may then be possible to blur the top and bottom rows of pixels so that, during playback, these rows can be stretched to the poles.
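One possible reading of scaling the range is to divide the sine of the latitude by the sine of a cut-off latitude, so that the cut-off (rather than the pole) lands on the edge of the frame. The Java sketch below follows that reading. The names, the 60 degree cut-off and the clamping of latitudes beyond it are all assumptions made for illustration, not something I have tested in the renderer or player.

```java
// Sketch of a cropped equal-area mapping; the cut-off latitude is hypothetical.
final class CroppedEqualAreaSketch {

    /** Latitude (radians) -> v in [0, 1]; latitudes beyond the cut-off are clamped. */
    static double latitudeToV(double latRad, double maxLatRad) {
        // Scale the range: sin(lat) is divided after it is taken.
        double scaled = Math.sin(latRad) / Math.sin(maxLatRad);
        scaled = Math.max(-1.0, Math.min(1.0, scaled));
        return (scaled + 1.0) / 2.0;
    }

    /** Inverse mapping: v in [0, 1] -> latitude (radians) within the cut-off. */
    static double vToLatitude(double v, double maxLatRad) {
        return Math.asin((2.0 * v - 1.0) * Math.sin(maxLatRad));
    }

    public static void main(String[] args) {
        double maxLat = Math.toRadians(60.0); // hypothetical cut-off
        for (double latDeg = -90.0; latDeg <= 90.0; latDeg += 30.0) {
            System.out.printf("lat %.0f -> v %.3f%n",
                    latDeg, latitudeToV(Math.toRadians(latDeg), maxLat));
        }
    }
}
```

With this form the rows at the cut-off still advance at a rate proportional to the cosine of the cut-off latitude, instead of tending to zero as they do at the poles of the unscaled projection.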

Further Reading

Rendering Omni‐directional Stereo Content Google Inc.
